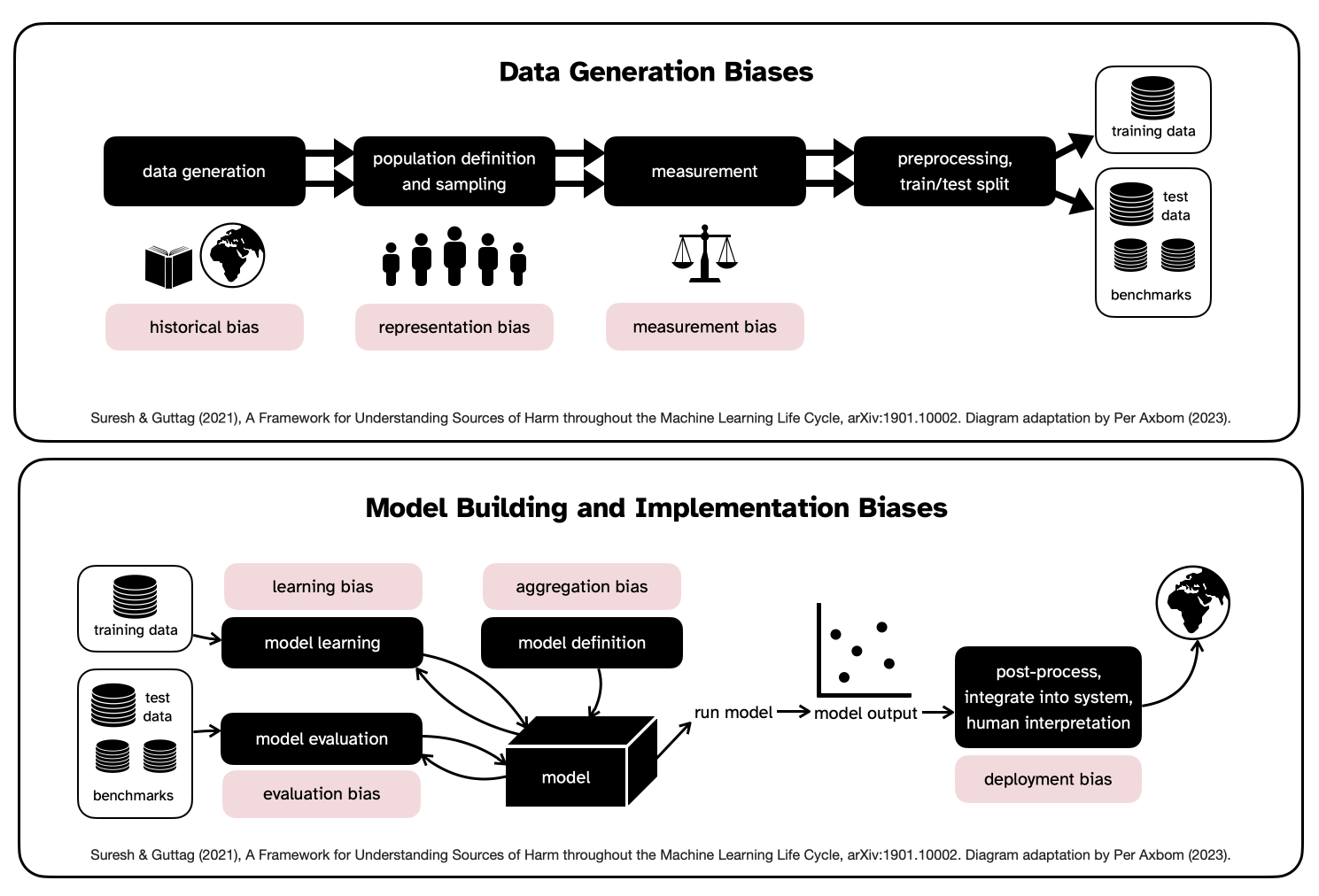

In the one-minute video below I am speaking seven languages. In truth, I can speak two of those. None of the audio is actually me speaking, even if it sounds very much like my voice. And the video? Despite what it looks like, that's not me moving my mouth. It's impressive in some ways and quite unsettling in others.

A short compilation of seven videos of me delivering the same message, each segment in a different language. All full-length videos can be viewed at the end of the post.

To make this happen, I made a two-minute recording of myself talking in English about random stuff and uploaded it as input to the generative tool offered by a service called HeyGen. That video provided a voiceprint that can be used for more than 25 languages, and counting. And honestly, the generated English voice really does sound like me.

As for the video, the scene where I appear to be standing is where I was actually standing when I recorded the original video. But the head, hand, eye and lip movements are all generated based on what I want my digital twin, or puppet, to say. I type text into a box, and out comes a video of me speaking that text in the selected language.

Note that it has no problem with motion in the background, which is something I wanted to test.

Your personal puppet will say anything

To be clear, I'm using the budget version of this service. Let's say I wanted a full-body version of myself with customisable backgrounds. I could get that by recording a few minutes of myself talking in a green screen studio. A bit more costly, but not a huge deal. For the purpose of sparking ideas about what's possible, though, the budget version will likely suffice.

I can see benefits here for many types of roles: instructors, managers and presenters who need to make announcements or record short tutorials. A digital puppet could also simply read out meeting minutes or reports for people who'd rather not – or cannot – read them.

Benefits

Some advantages are easy to grasp:

- There is no need for retakes because there is no stuttering, stumbling or tripping over words. There are no technical issues either, so the time savings are massive.

- If I want to change a word in the video, I literally just change that word in the script. My virtual voice also doesn't change over the course of the day the way my real voice does; in real life I often have to re-record big segments so as not to draw attention to differences in pitch.

- I don't need to set up a camera, fix the lighting or even get dressed. The visual scene is already established. You can also set up your account with a selection of scenes for different occasions; each scene just needs its own two-minute source video.

- I can generate versions of the same video in multiple languages, including ones I do not know. Yes, this benefit comes with some caveats.

Risks

So let’s consider the risks:

- If you auto-translate into languages you have not mastered, the result may be grammatically wrong, confusing or even offensive in ways you did not intend. Having a fluent speaker handle quality assurance is still a must.

- Unnatural intonation is likely to crop up from time to time as well, and it did when I generated a Swedish voice. I'm fluent in Swedish and was not entirely impressed by how some words were pronounced. You have to assume the same thing is happening in every language.

- People may feel duped and lose trust if you aren't transparent about what's really going on. Being clear about how you use the technology will matter. And even when people are aware, they may feel that you are taking shortcuts and not putting in the work. Your specific context will matter.

- Overuse. When something is this easy, it is likely to be relied on in many cases where it isn't actually useful or relevant. This also erodes quality and trust in the long run.

- When using a third-party service, make sure you understand how and under what conditions the material you use as input (your videos and your text content/scripts) may be used by the provider to fine-tune the service. You want to make sure that no sensitive content ends up in undesired places, and that you don’t sign away rights for other people to use your likeness.

- Who gets to control your digital twin? A virtual puppet whose control you can smoothly and easily delegate to others can, of course, backfire. You may find your likeness saying things you haven't signed off on. And once trust becomes an issue, how can this type of virtual presentation continue to be trusted?

Beyond these risks, synthetic video will of course also serve as a tool for misuse and abuse. As I mentioned, whoever controls someone's digital twin can make it appear that they are saying anything, and deceive a large number of people. This can range from thoughtless practical jokes to making someone appear to incite violence or make racist remarks.

On the topic of abuse, I want to raise awareness of the fact that many young women are being made to appear in pornographic media against their will, with very little power to object or bring abusers to justice. And even if laws and oversight improve, remember that many lives are already ruined the moment the abusive content is published. If you ever come across content like this, report it to law enforcement immediately.

Consider how… but also why and when and who… and why not

More and more individuals and organisations will likely be using generative software to produce synthetic video. If you think there's a benefit for you, you should start learning. But you should also be identifying and managing the use cases where transparency, trustworthiness and risk come into play. If trust is lost completely, one misstep could erase all the perceived benefits in seconds.

And honestly, simply as a human being on this planet, you should probably be aware of how easy and cheap it now is for anyone to grab anyone else's face from online media and make them say anything. In any language.

P.S. I’m leaning more and more towards calling it puppet video, rather than synthetic video. What do you think?

All videos

I also have full-length versions of me delivering the same message in seven different languages. I've included one version of me speaking Swedish in my own voice, for comparison. The video in that clip is still generated, and it's good to know that audio can also be used as input for the video generation.

Further reading